Neural Sparse ANN in OpenSearch

Integrated the SEISMIC algorithm into OpenSearch for sub-3ms sparse vector retrieval at billion-document scale, shipped in OpenSearch 3.3.0.

Overview

Neural sparse search combines the interpretability of keyword-based retrieval with the semantic understanding of neural models. Traditional inverted index algorithms (WAND, MaxScore) assume Zipfian term distributions and short queries — assumptions violated by learned sparse vectors like SPLADE, resulting in poor query latency at scale. To bridge this gap, I contributed to integrating the SEISMIC algorithm (Spilled Clustering of Inverted Lists with Summaries for Maximum Inner Product Search) into the OpenSearch neural-search plugin, enabling efficient approximate nearest neighbor search over sparse vectors at billion-document scale.

The SEISMIC Algorithm

SEISMIC achieves sub-millisecond query latency through three core components:

1. Static Pruning: For each vocabulary coordinate, inverted lists are sorted by value and truncated to the top \(\lambda\) entries, exploiting the “concentration of importance” property — learned sparse models concentrate L1 mass on relatively few coordinates.

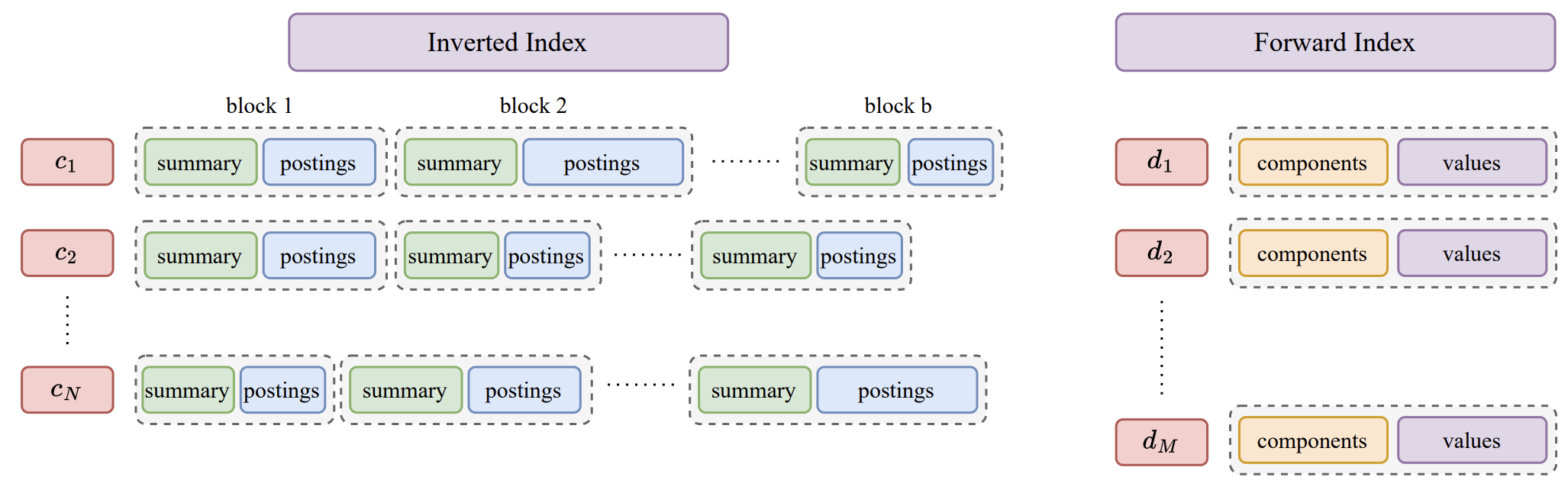

2. Geometric Blocking: Inverted lists are partitioned into \(\beta\) geometrically-cohesive blocks via K-means clustering, grouping semantically similar documents for efficient dynamic pruning.

3. Block Summary Vectors: Each block receives a summary vector containing the maximum value per coordinate from its documents. These summaries are pruned to \(\alpha\)-mass subvectors and quantized to 8-bit values, enabling rapid block evaluation without examining individual documents.

During retrieval, the algorithm selects the highest-value query coordinates, evaluates blocks via their summaries, skips blocks unlikely to improve results, and fully evaluates only promising blocks using exact vectors from a forward index.

My Contributions

- Algorithm Integration: Architected and developed the end-to-end SEISMIC implementation within OpenSearch’s neural-search plugin in Java, including REST API design for cache operations, automatic hyper-parameter framework, and indexing thread number control.

- Benchmarking & Evaluation: Evaluated and compared multiple leading sparse retrieval and ANN algorithms (including inverted index baselines and graph-based approaches) to identify optimal candidates for production deployment. This involved deep analysis of algorithmic trade-offs across latency, recall, memory footprint, and index build time.

- Performance Optimization: Implemented advanced memory management with dense/sparse assignment to reduce cache miss rates, document ID reordering for improved locality, and cache-aware optimization for faster index building — collectively reducing memory footprint by 40% and accelerating mathematical operations.

- Quality Assurance: Built a comprehensive test suite with 50+ unit and integration tests, ensuring correctness across edge cases including empty indices, concurrent access, and cache eviction scenarios.

Results

The feature shipped in OpenSearch 3.3.0, with benchmarks on MS MARCO with SPLADE embeddings showing:

| Metric | Result |

|---|---|

| Query latency | Sub-3ms (single-threaded) |

| Latency reduction | 17–35x vs. exhaustive search |

| Throughput improvement | 18x over baseline |

| Memory reduction | 40% via LRU caching + optimization |

| Index build time | Minutes (vs. hours for graph-based methods) |

The combination of low latency, high recall, modest memory footprint, and fast index construction makes Neural Sparse ANN practical for production sparse retrieval at billion-document scale.