Federated Learning with Adaptive Device Selection

Investigated and benchmarked federated learning algorithms for heterogeneous edge environments at Cornell University, supervised by Prof. Qing Zhao.

Overview

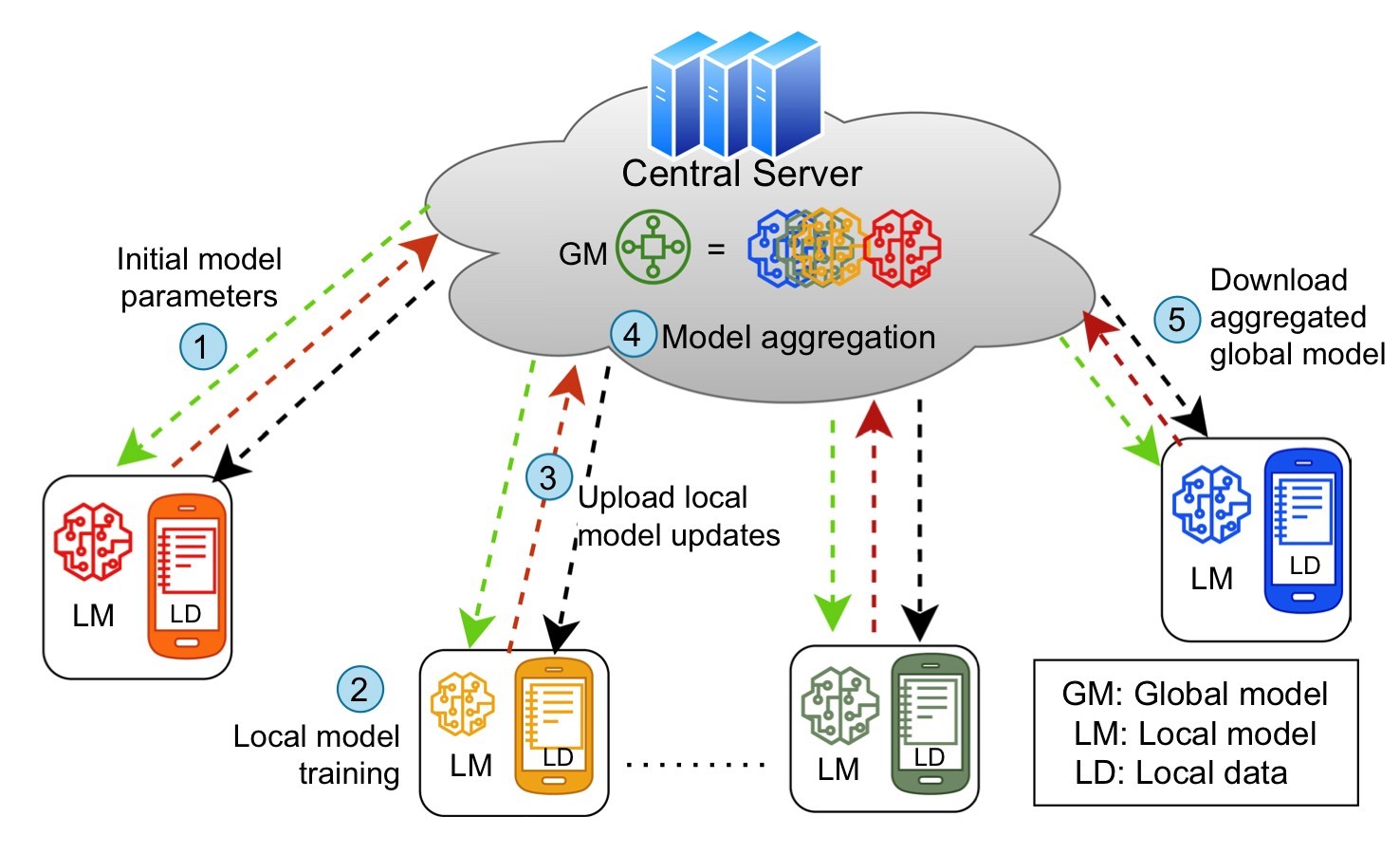

This M.Eng design project at Cornell University (supervised by Prof. Qing Zhao) investigated Federated Learning in heterogeneous environments where edge devices have varying data distributions, computational capabilities, and network bandwidth. Federated Learning enables collaborative model training across distributed devices without sharing raw data, preserving user privacy — but real-world deployments face significant challenges from device and data heterogeneity.

Algorithms Compared

We implemented and compared three major FL algorithms on the MNIST dataset using logistic regression, with 100 communication rounds and 20 local epochs per round (Google Colab, V100 GPUs):

1. FedAvg (McMahan et al., 2017) — The foundational FL algorithm:

- Clients selected uniformly at random each round

- Optimization target: \(\min_w f(w) = \sum p_k F_k(w)\)

- Serves as the baseline for comparison

2. FedProx (Li et al., 2020) — Addresses statistical heterogeneity:

- Adds a proximal regularization term to prevent local models from diverging too far from the global model

- Modified local objective: \(\min_w h(w; w^t) = F_k(w) + \frac{\mu}{2} \|w - w^t\|^2\)

- Designed specifically for Non-IID client environments

3. q-FedAvg (Li et al., 2019) — Prioritizes fairness:

- Re-weights the objective to emphasize poorly-performing clients

- Modified objective: \(\min_w f(w) = \sum \frac{p_k}{q+1} F_k^{q+1}(w)\)

- Parameter \(q\) controls fairness emphasis (larger \(q\) = more weight to high-loss clients)

- Reduces variance among devices’ test accuracy by 45%

Experimental Results

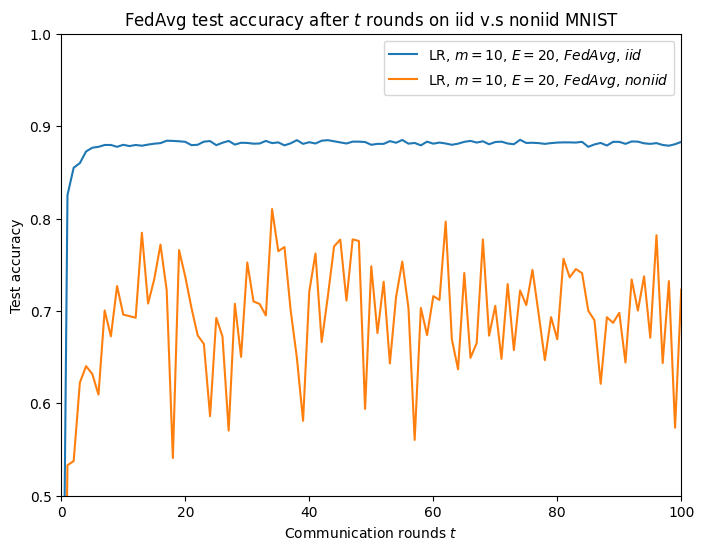

Effect of Data Distribution

- Non-IID data consistently produces worse accuracy than IID, with higher oscillation across communication rounds

- Prior work (136K smartphone study) showed heterogeneity decreases FL accuracy by 9.2% and increases convergence time by 250%

- Increasing client count from 10 to 50 significantly narrows the IID/Non-IID gap, as more clients enable better exploration of the optimization landscape

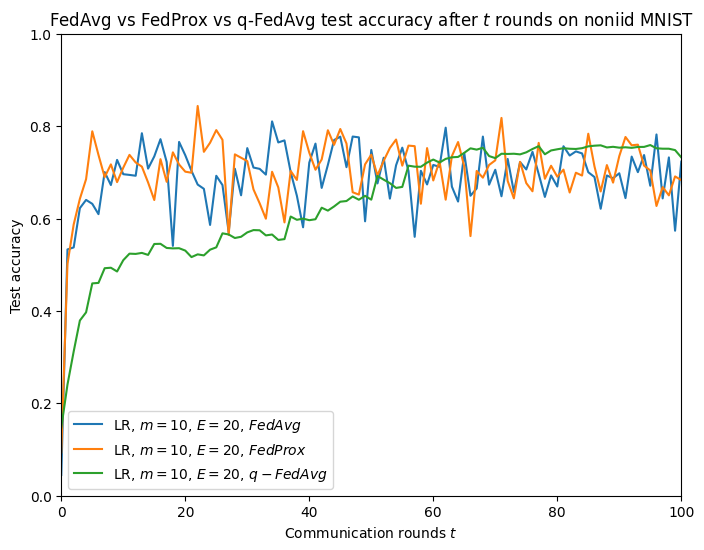

Algorithm Convergence (Non-IID, 10 clients)

- FedAvg and FedProx show similar convergence rates and final accuracy under controlled conditions

- q-FedAvg exhibits noticeably slower convergence, a direct consequence of its fairness weighting that prioritizes underperforming clients

- All three algorithms reach similar final accuracy, but the paths differ substantially

Key Insight: Fairness-Performance Trade-off

q-FedAvg demonstrates an explicit trade-off — it reduces accuracy variance across clients at the cost of slower overall convergence. This has practical implications: applications requiring equitable performance across all users (e.g., healthcare) may prefer q-FedAvg, while latency-sensitive applications may prefer FedAvg/FedProx.

Comprehensive Literature Survey

We reviewed 40+ papers and categorized FL challenges into four heterogeneity dimensions:

| Heterogeneity Type | Description | Key Methods |

|---|---|---|

| Data Space | Feature/label size differences | Data augmentation, GANs |

| Statistical | Non-IID data distributions | FedProx, ISFedAvg, FedPNS |

| System | Varying compute/network capabilities | FedCS, OCEAN |

| Fairness | Biased client selection | q-FedAvg, Eiffel, Oort |

We also evaluated four client selection paradigms: random (diversity), greedy (performance), clustering (similarity grouping), and multi-armed bandit (adaptive exploration-exploitation balance).

Joint work with Joash Shankar (jcs556) at Cornell University, 2023–2024.