Automatic Archived Film Restoration

Deep learning pipeline for automated detection and restoration of degraded archived films using Deep Image Prior and two new algorithms, Pixel Substitution and Alternating Self-Reference. Bachelor's final year project (FYP) at HKPolyU.

Overview

This Bachelor’s final year project at The Hong Kong Polytechnic University (supervised by Prof. Kenneth Lam) addresses the preservation of archived films suffering from three primary types of degradation: blotches (dirt/moisture damage), scene flickering (intensity variations from aging), and partial color artifacts (chemical changes in film material). The system combines classical image processing with deep learning to automatically detect and restore degraded frames without requiring any training dataset.

Detection Pipeline

Low-Rank Decomposition for Artifact Detection

For N consecutive frames, the algorithm exploits the observation that artifact pixels are sparse while non-artifact pixels form a low-rank structure across frames:

- Construct matrices \(M_R, M_G, M_B\) (each \(mn \times N\)), where column \(i\) is the corresponding color channel of frame \(i\) (of size \(m \times n\)) flattened into a vector

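- Solve, separately for each channel matrix \(M \in \{M_R, M_G, M_B\}\), the Robust PCA problem \(\min_{L,S} \|L\|_* + \lambda\|S\|_1 \quad \text{s.t.} \quad M = L + S\), where the nuclear norm \(\|L\|_*\) (sum of singular values) favors low rank and \(\|S\|_1\) (sum of absolute entries) favors sparsity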

- Low-rank component \(L\) captures the consistent film content; sparse component \(S\) isolates artifacts

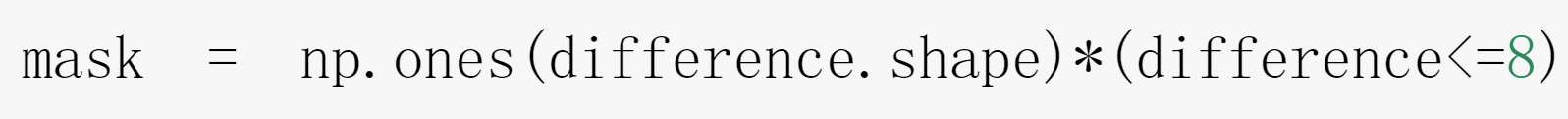

- Binary masks generated per frame, where \(S^i\) is column \(i\) of \(S\) reshaped to \(m \times n\): \(S^i_{\text{binary}}(p) = \begin{cases} 1 & \text{if } S^i(p) \neq 0 \\ 0 & \text{otherwise} \end{cases}\)

- False alarm removal by cross-checking R/G/B planes for consistent sparsity patterns
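The low-rank/sparse split above is Robust PCA. A minimal NumPy sketch, assuming the standard inexact augmented Lagrange multiplier (IALM) solver of Lin, Chen and Ma with its usual defaults (\(\lambda = 1/\sqrt{\max(mn, N)}\)); the README does not say which solver or \(\lambda\) the project used. The `atol` parameter and the two-of-three channel vote in `combine_channel_masks` are hypothetical stand-ins for the mask binarization and the R/G/B false-alarm check.

```python
import numpy as np


def soft_threshold(x, tau):
    """Entry-wise shrinkage: the proximal operator of tau * ||.||_1."""
    return np.sign(x) * np.maximum(np.abs(x) - tau, 0.0)


def svd_threshold(x, tau):
    """Singular value shrinkage: the proximal operator of tau * ||.||_*."""
    u, s, vt = np.linalg.svd(x, full_matrices=False)
    return (u * soft_threshold(s, tau)) @ vt


def robust_pca(M, lam=None, tol=1e-7, max_iter=500):
    """Solve min ||L||_* + lam * ||S||_1  s.t.  M = L + S  with inexact ALM."""
    M = np.asarray(M, dtype=np.float64)
    if lam is None:
        lam = 1.0 / np.sqrt(max(M.shape))
    spectral = np.linalg.norm(M, 2)                # largest singular value
    Y = M / max(spectral, np.abs(M).max() / lam)   # dual variable initialisation
    mu, mu_max, rho = 1.25 / spectral, 1.25e7 / spectral, 1.5
    L = np.zeros_like(M)
    S = np.zeros_like(M)
    m_norm = np.linalg.norm(M)                     # Frobenius norm
    for _ in range(max_iter):
        L = svd_threshold(M - S + Y / mu, 1.0 / mu)      # low-rank update
        S = soft_threshold(M - L + Y / mu, lam / mu)     # sparse update
        residual = M - L - S
        Y = Y + mu * residual                            # dual ascent
        mu = min(mu * rho, mu_max)
        if np.linalg.norm(residual) / m_norm < tol:
            break
    return L, S


def artifact_mask(S, atol=0.0):
    """S_binary: True where |S| > atol (atol = 0 reproduces the 'S != 0' rule)."""
    return np.abs(S) > atol


def combine_channel_masks(masks, min_votes=2):
    """Hypothetical false-alarm filter: keep pixels flagged in >= min_votes channels."""
    return np.sum(np.stack(masks), axis=0) >= min_votes


# Usage sketch for N frames of shape (m, n, 3), red channel only:
# M_R = frames[..., 0].reshape(N, m * n).T        # (mn, N), one column per frame
# L, S = robust_pca(M_R)
# mask_i = artifact_mask(S[:, i]).reshape(m, n)   # binary mask of frame i
```

A small `atol` is useful in practice because an iterative solver stopped at a finite tolerance can leave tiny non-zero entries in \(S\).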

Semi-Transparent Blotch Detection

For blotches that alter but don’t fully obscure content, the system operates in HSV color space:

- Computes Global Peli’s Contrast at multiple resolutions

- Selects the optimal resolution using a Weber threshold of 0.02

- Computes Shrunk Saturation to suppress non-blotch intensity variations

- Derives optimal detection threshold by maximizing a visual distortion criterion
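The first two steps can be sketched in NumPy on the HSV value channel. This is a hedged approximation, not the project's code: it approximates Peli's band-limited contrast \(c = a / l\) (band-pass content divided by the low-pass content below that band) with a simple 2×2-averaging pyramid, averages \(|c|\) over the frame to obtain one "global" value per level, and uses a hypothetical selection rule (the coarsest level whose global contrast still reaches the 0.02 Weber threshold). Shrunk Saturation and the distortion-based threshold are omitted because they are not specified here.

```python
import numpy as np

WEBER_THRESHOLD = 0.02  # value quoted in the README


def downsample(img):
    """Halve the resolution by averaging non-overlapping 2x2 blocks."""
    return 0.25 * (img[0::2, 0::2] + img[1::2, 0::2] + img[0::2, 1::2] + img[1::2, 1::2])


def upsample(img):
    """Double the resolution by pixel replication."""
    return np.repeat(np.repeat(img, 2, axis=0), 2, axis=1)


def global_peli_contrast(value, levels=4, eps=1e-6):
    """Mean |band-pass / low-pass| at each pyramid level, finest level first."""
    k = 2 ** levels
    img = np.asarray(value, dtype=np.float64)
    img = img[: img.shape[0] // k * k, : img.shape[1] // k * k]  # sizes divisible by 2**levels
    contrasts = []
    for _ in range(levels):
        low = upsample(downsample(img))  # content below this band, l(x, y)
        band = img - low                 # band-pass content, a(x, y)
        contrasts.append(float(np.mean(np.abs(band) / (low + eps))))
        img = downsample(img)            # move to the next, coarser resolution
    return contrasts


def select_resolution(contrasts, threshold=WEBER_THRESHOLD):
    """Hypothetical rule: index of the coarsest level with contrast >= threshold."""
    passing = [i for i, c in enumerate(contrasts) if c >= threshold]
    return max(passing) if passing else None
```

A flat frame has zero contrast at every level, so no resolution is selected; a fine checkerboard has contrast only at the finest level.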

Deep Image Prior Restoration

The key insight of Deep Image Prior (Ulyanov et al.) is that the architecture of a convolutional network itself captures natural image statistics. When a randomly initialized network is fitted to a single degraded image, it reproduces natural image structure much faster than noise, so stopping the optimization early yields a restored image with no training data at all.

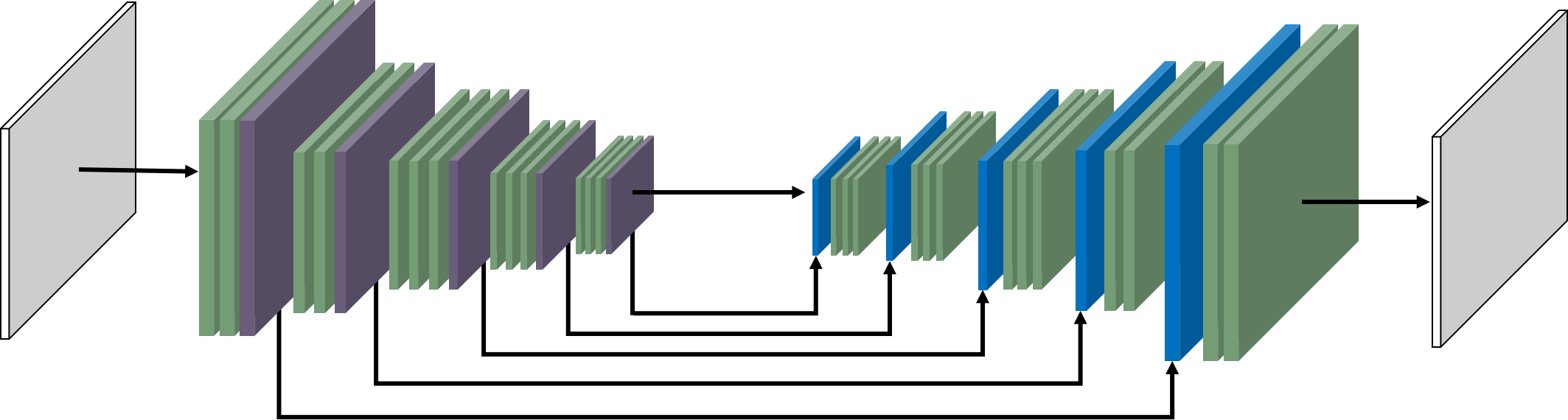

Network Architecture

- Hourglass encoder-decoder with symmetric structure and skip connections for spatial precision

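- Gated convolution instead of vanilla convolution: \(O_{y,x} = \phi(\text{Feature}_{y,x}) \odot \sigma(\text{Gating}_{y,x})\), where \(\text{Feature}\) and \(\text{Gating}\) are two separate convolutions of the same input, \(\phi\) is an activation function, and the sigmoid gate \(\sigma(\cdot)\) acts as a learned soft mask that distinguishes valid from invalid pixels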

- SN-PatchGAN discriminator for stable adversarial training

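- Error function for inpainting: \(E(x, x_0) = \|(x - x_0) \odot m\|^2\), where \(x\) is the network output, \(x_0\) is the degraded frame, and \(m\) is the binary mask that is 1 on valid pixels and 0 on detected artifacts (i.e., \(m = 1 - S^i_{\text{binary}}\)), so damaged pixels never enter the loss and are filled in by the network prior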

- Optimizer: Adam; iterations: 3000 (tuned for speed/quality balance)
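To make the gated convolution and the masked error concrete, here is a single-channel NumPy sketch. It follows the gated-convolution formula with two separate kernels for Feature and Gating; choosing ELU for \(\phi\) and "valid" padding are assumptions, and a real network would use a deep-learning framework with multi-channel layers optimized by Adam rather than these hand-written functions.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def elu(x):
    """ELU activation, used here as the (assumed) choice of phi."""
    return np.where(x > 0, x, np.expm1(np.minimum(x, 0.0)))


def conv2d_valid(x, kernel):
    """Single-channel 'valid' cross-correlation, as computed by CNN conv layers."""
    windows = sliding_window_view(x, kernel.shape)  # (H-kh+1, W-kw+1, kh, kw)
    return np.einsum("ijkl,kl->ij", windows, kernel)


def gated_conv2d(x, w_feature, w_gating, b_feature=0.0, b_gating=0.0):
    """O = phi(Feature) * sigmoid(Gating), both computed from the same input."""
    feature = conv2d_valid(x, w_feature) + b_feature
    gating = conv2d_valid(x, w_gating) + b_gating  # soft validity mask in (0, 1)
    return elu(feature) * sigmoid(gating)


def masked_error(x, x0, m):
    """E(x, x0) = ||(x - x0) * m||^2, with m = 1 on valid pixels, 0 on artifacts."""
    return float(np.sum(((x - x0) * m) ** 2))
```

In the inpainting loop, `x` would be the network output for a fixed random input and `m` the complement of the detected artifact mask, so a large error at an artifact pixel contributes nothing to the loss.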

Novel Algorithmic Contributions

1. Pixel Substitution

Leverages temporal correlation between consecutive frames via Farneback’s dense optical flow:

- For each degraded pixel in frame A, find the corresponding pixel in adjacent frame B using optical flow alignment

- If B’s corresponding pixel is NOT degraded (based on the mask), substitute A’s pixel with B’s value

- Feed the substituted frame into Deep Image Prior, giving the network cleaner reference information
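The substitution step can be sketched in NumPy as follows. This is an illustration under assumptions, not the project's code: the flow follows OpenCV's convention for `cv2.calcOpticalFlowFarneback(prev=A, next=B, ...)`, where \(A(y, x) \approx B(y + \text{flow}_y, x + \text{flow}_x)\); matched positions are rounded to the nearest pixel; and the function name and the returned "remaining" mask are hypothetical.

```python
import numpy as np


def pixel_substitution(frame_a, frame_b, mask_a, mask_b, flow_ab):
    """Replace degraded pixels of A with flow-aligned, undegraded pixels of B.

    frame_a, frame_b: (H, W) or (H, W, C) arrays.
    mask_a, mask_b:   (H, W) bool arrays, True where the pixel is degraded.
    flow_ab:          (H, W, 2) flow (dx, dy), e.g. from
                      cv2.calcOpticalFlowFarneback(gray_a, gray_b, None,
                                               0.5, 3, 15, 3, 5, 1.2, 0)
    Returns the substituted frame and the mask of pixels still degraded.
    """
    h, w = mask_a.shape
    ys, xs = np.nonzero(mask_a)                         # degraded pixels in A
    xb = np.rint(xs + flow_ab[ys, xs, 0]).astype(int)   # matching column in B
    yb = np.rint(ys + flow_ab[ys, xs, 1]).astype(int)   # matching row in B
    inside = (xb >= 0) & (xb < w) & (yb >= 0) & (yb < h)
    ys, xs, yb, xb = ys[inside], xs[inside], yb[inside], xb[inside]
    clean = ~mask_b[yb, xb]                             # only copy undegraded B pixels
    out = frame_a.copy()
    out[ys[clean], xs[clean]] = frame_b[yb[clean], xb[clean]]
    remaining = mask_a.copy()
    remaining[ys[clean], xs[clean]] = False             # these pixels are now filled
    return out, remaining
```

Pixels whose match in B is itself degraded, or falls outside the frame, are left for Deep Image Prior to inpaint.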

2. Alternating Self-Reference

Addresses the “dragging artifact” problem that arises when cross-frame information introduces motion inconsistencies:

- During Deep Image Prior iterations, alternate the reference between:

  - The frame with Pixel Substitution applied

  - The original frame without Pixel Substitution

- This balances the benefits of cross-frame information with temporal continuity

- Ratio: 1.5:1 (substituted : original)
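One way to realize the 1.5:1 alternation is an evenly interleaved schedule over the Deep Image Prior iterations. The sketch below is hypothetical: only the ratio is given, so the interleaving pattern, the integer form (3, 2) of the ratio, and the helper names are assumptions.

```python
def use_substituted(t, ratio=(3, 2)):
    """True if iteration t should fit the Pixel-Substitution frame.

    Spreads ratio[0] substituted iterations and ratio[1] original-frame
    iterations evenly over every cycle of ratio[0] + ratio[1] iterations,
    using exact integer arithmetic (no floating-point drift).
    """
    sub, orig = ratio
    cycle = sub + orig
    return (t + 1) * sub // cycle > t * sub // cycle


def reference_schedule(num_iters=3000, ratio=(3, 2)):
    """Label each iteration with the reference frame it should fit."""
    return ["substituted" if use_substituted(t, ratio) else "original"
            for t in range(num_iters)]


# Inside the optimization loop one would pick the target per iteration, e.g.
# target = frame_sub if use_substituted(t) else frame_orig
```

With 3000 iterations this gives 1800 substituted-reference and 1200 original-reference iterations, in the repeating pattern original, substituted, original, substituted, substituted.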

The combined pipeline: frame extraction → low-rank mask detection → optical flow computation → Pixel Substitution → Alternating Self-Reference Deep Image Prior → quality evaluation.

Experimental Results

Evaluated on real degraded film samples from the MeiAh company using NIQE (Natural Image Quality Evaluator), a fully blind quality metric based on natural image statistics (lower is better).

Ablation Study: Algorithm Components

| Configuration | Pixel Substitution | Alternating Reference | NIQE (lower is better) |
|---|---|---|---|
| D (baseline) | OFF | OFF | 39.457 |
| C | ON | OFF | 39.107 |
| A | ON | ON (cross-frame) | 66.448 |
| Best | ON | ON (self-reference) | 34.825 |

- Pixel Substitution alone gives a modest improvement (39.457 → 39.107)

- Cross-frame Alternating Reference causes severe dragging artifacts (NIQE = 66.448)

- Alternating Self-Reference resolves the dragging problem and achieves the best NIQE of 34.825
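For scale, the relative NIQE change of each configuration against baseline D can be computed directly from the table. This is a quick arithmetic check on the reported numbers, not an additional experiment; the dictionary and function names are illustrative.

```python
NIQE = {"D (baseline)": 39.457, "C": 39.107, "A": 66.448, "Best": 34.825}


def relative_change(scores, baseline="D (baseline)"):
    """Percentage change in NIQE vs. the baseline (negative = better quality)."""
    base = scores[baseline]
    return {name: 100.0 * (s - base) / base for name, s in scores.items()}


for name, pct in relative_change(NIQE).items():
    print(f"{name:>12}: {pct:+.2f}%")
```

This prints about -0.89% for C, +68.41% for A and -11.74% for Best: the best configuration lowers NIQE by roughly 11.7% relative to the baseline, while cross-frame alternation raises it by roughly 68%.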

Manual Setting Results

- Simple artifacts (black scratch, static background): NIQE improved from 22.775 to 22.293

- Complex artifacts (irregular color distortion, dynamic scene): NIQE worsened from 22.630 to 52.384, revealing Deep Image Prior’s limitations on scenes with complex depth of field

Future Directions

- Integration with Meta’s Segment Anything for semantic-aware mask generation

- ML-based detection algorithms to reduce false alarm rates

- Domain-specific NIQE models fine-tuned on film restoration tasks